Context {length} Matters

What now.. API Budget Overrun? Rants on Cognitive Neuroscience? Discussions of Computational Intelligence?

Saturday morning means it's time for a blog post about "The Ten Stages of AI/ML Engineering" 💖 .. aka "Stop wasting money", or the classic "No one tuned any caches?"

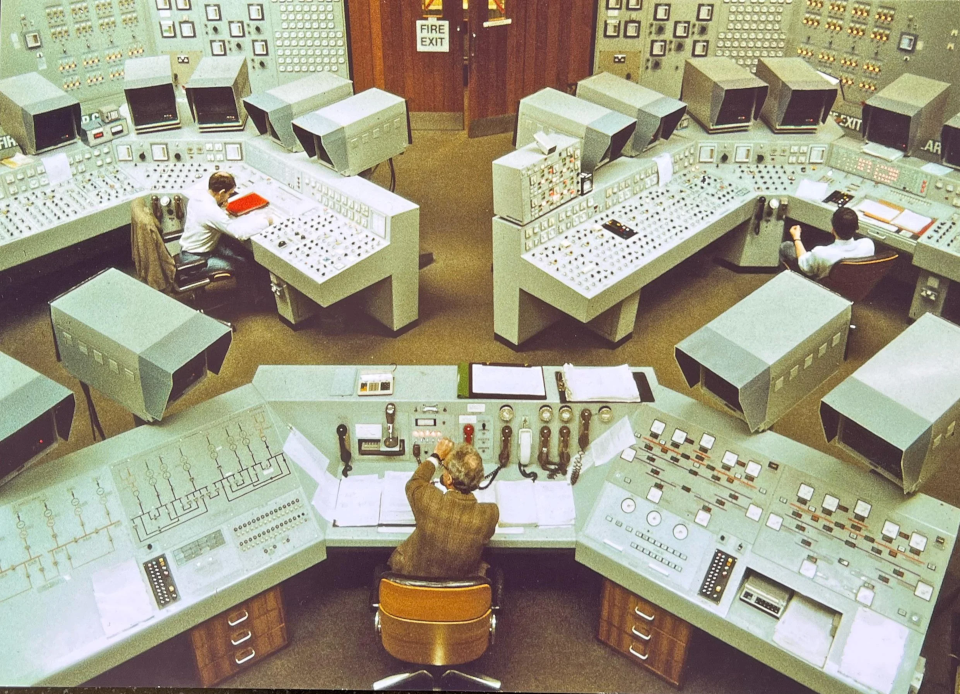

These are SELECT NOW(); concepts as well as antiquated ones from my OLTP/OLAP Data Architect era jobs, where so much of the core knowledge translates to "LLM Key/Value Cache Architectures" that Directors who haven't had the same experiences always get confused. Anyway, about those Neural Nets...

Classic Neuroscience example: "I'm having a conversation, I'm sleepy, and my brain will only take in single simple sentences with one action and at most one request; anything further will be silently discarded or cause a Brain Queue."

We all know the feeling of a sluggish Brain Queue, where our sleepiness limits comprehension, attention span, and how much sensory input we can take in for decision-making.

Having insufficient resources, like attention or short-term memory, can leave the mind stuck in a Consideration Loop, leading to "Sleepy-Faced Analysis-Paralysis". This is where the brain is too tired to comprehend the full cognitive pathway required to exit the loop.

When working with on-premises or public-API-based LLMs, engineering choices must be made: specifically the First-Stage task of "choosing the correct model".. and, more generally, any decision-making process where the length of associative (contextual) information is a requirement.

The TL;DR .. Sure, Time's'a Wastin'

How about some quick blurbs from real human-to-human conversations, sourced from my career path right up through this past week? They're decently representative examples of short context vs long context.

She's too verbose. She seems shy and quiet. She talks too much. She doesn't go deep on technical details. She goes on tangents and my attention span is too short to see the larger picture. She's hard to read. She's too open about everything. She's difficult to "get into the weeds with". (that last one is my new favorite).

It's always a double standard; I'm used to it, contextually speaking.

- Context length is the amount of text an LLM can see at once.

- Context length affects response quality, server specs, latency, API usage, and cost.

- A longer window can help the model reason across more information, but it also makes the system heavier to run.

- Always treat context length as a core system requirement.

- Define it, test it, document it, monitor it, know it, love it.

- Adjust it based on real usage. It will be chunked, split, cached.

What's this about Burning Runways?

But before we dig in, and because everyone loves to complain about 💸... it's really in one's best interest to understand how those bills become a reality, especially right now, when the industry can treat "courting adoption" as a write-off vs "chasing profits", except for some (oooh Claude, you're not being ethical lately).

Rewind from LLMs to the SaaS era... just look at real-world stories where applications did not control their Context States, leading to SaaS application API bills in the tens and hundreds of thousands of dollars:

- $738.420, "I subscribe to Vercel Pro for $20 per month. I also added a spending limit of $120, so no nasty surprises, right?"

- $72,000.999, "We Burnt $72K testing Firebase + Cloud Run and almost went Bankrupt"

- $120,000.420, "Cloudflare took down our website after trying to force us to pay 120k$"

What is Context Length?

Context length, sometimes called window size or max tokens per forward pass, is the number of tokens a model can attend to at once. It directly limits how much raw text, user history, or retrieved knowledge can be processed in a single inference step. Our brains do this constantly: we infer and make decisions based on memory and the situational context of data, and neural networks do too.
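A quick way to make the definition concrete: for most decoder-only models, the prompt and the tokens the model generates share the same window, so a request fits only if both together stay under the limit. This is a minimal sketch assuming a rough heuristic of about four characters per token for English text; real counts come from the model's own tokenizer, and `estimate_tokens` and `fits_in_context` are hypothetical helpers, not a library API.

```python
import math

# Rough heuristic (assumption): ~4 characters per token for English text.
# Real token counts depend on the model's tokenizer; use it when available.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(prompt: str, max_output_tokens: int, context_window: int) -> bool:
    """Prompt tokens plus generated tokens must fit in one window."""
    return estimate_tokens(prompt) + max_output_tokens <= context_window


prompt = "word " * 6000                          # 30,000 characters
print(estimate_tokens(prompt))                   # 7500
print(fits_in_context(prompt, 512, 8192))        # True  (7500 + 512 = 8012)
print(fits_in_context(prompt, 1024, 8192))       # False (7500 + 1024 = 8524)
```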

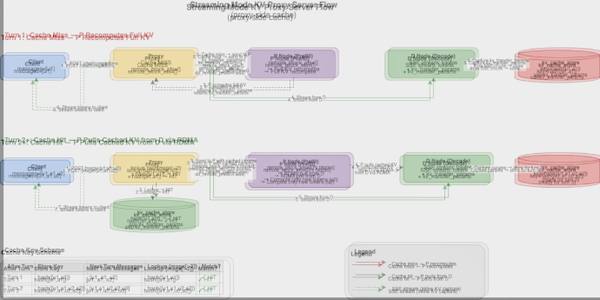

Since every engineering stage ultimately decides what the model sees and how much it costs to see it, context length is a cross‑cutting quality attribute. Below we walk through each first‑stage task (including the critical "Iterative Revision" stage) and explain how important context length is, what engineering implications arise, and what concrete actions you can or should take and why.

For comparison, click through the data table below to see model-specific specifications, including Context Length, for various Open Source models (these are not the latest LLMs, so go look at Hugging Face if you want more info).

Context Length: What It Changes

So, you want it hot and loose or you want those warmed-up caches pre-primed from snapshot slapshots to the top-layer of the mesh?

"Sure, but how much can the cache layers (there's more than three) handle before we overload the cluster with memory pressure or downstream write amplification?"

Small Context Windows Are Great, Longer Also Yes!

- A small context window is fine for short prompts and for mobile devices with NPUs, which are fundamentally different from large GPUs like the H200 or MI300... or from inference clusters serving thousands of them.

- A larger window lets the model work across longer documents, multi-turn conversations, codebases, contracts, research notes, support histories, or anything else where the answer depends on more than one paragraph of information.

That does not make longer context automatically better. It makes the system more capable, but also more expensive and more operationally sensitive.

Longer Context Often Requires More Resources

Yeaaaah, but we're not talking about Gemma4 on LiteRT, this is a conceptual discussion for general LLM engineering. So...

- Longer Context = More memory pressure

- Longer Context = Higher GPU requirements

- Longer Context = Higher cost per request

- Longer Context = More complex data handling

- Longer Context = Requires more testing for latency, stability, and output quality
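The memory-pressure line is easy to put numbers on. A minimal sketch, assuming a standard transformer that caches keys and values for every layer and every token, so KV cache size grows linearly with context length. The model shape below (32 layers, 32 KV heads, head dimension 128, fp16) is an illustrative 7B-class configuration, not any specific model's spec, and `kv_cache_bytes` is a hypothetical helper.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int, head_dim: int,
                   bytes_per_elem: int = 2, batch: int = 1) -> int:
    """Keys and values (the factor of 2) for every layer, KV head, and token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * seq_len * batch


GIB = 1024 ** 3
# Illustrative 7B-class shape (assumed): 32 layers, 32 KV heads, head_dim 128, fp16.
for ctx in (4_096, 8_192, 16_384, 32_768):
    gib = kv_cache_bytes(ctx, n_layers=32, n_kv_heads=32, head_dim=128) / GIB
    print(f"{ctx:>6} tokens -> {gib:.1f} GiB of KV cache per sequence")
# 4096 -> 2.0, 8192 -> 4.0, 16384 -> 8.0, 32768 -> 16.0
```

Models using grouped-query attention cache fewer KV heads, which shrinks these numbers proportionally; model weights, activations, and framework overhead come on top.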

Context length is not just a model feature. It is an architecture decision.

Why Context Length Matters Across the Whole Project

Context length touches almost every part of an LLM project. It affects how we define the product, prepare the data, choose infrastructure, test the model, and manage cost.

1. Product requirements

Start with the real use case. Can the product work with short prompts, or does it need to process full documents, long chat histories, tickets, logs, reports, or contracts?

That answer should be written into the project requirements early. For example:

The model must support at least an 8K token context window for production inference.

Without that requirement, the team may pick a model, GPU profile, or data pipeline that works in a demo but fails under real usage. Confusion!
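One way to keep that requirement honest is to derive the window from its parts instead of picking a round number. A minimal sketch, assuming a request is made of a system prompt, the user's input, retrieved context, and generated output, plus a 10% safety margin, and taking "8K" as 8,192 tokens; `ContextBudget` and all the token counts are hypothetical.

```python
from dataclasses import dataclass

REQUIRED_MIN_WINDOW = 8_192  # "at least an 8K token context window" (8K taken as 8,192)


@dataclass(frozen=True)
class ContextBudget:
    """Hypothetical budget: every part of a request shares one context window."""
    system_prompt_tokens: int
    longest_input_tokens: int      # longest real document/history the product must accept
    retrieved_context_tokens: int  # RAG snippets, tool output, etc.
    max_output_tokens: int         # generated tokens count against the window too
    safety_margin_pct: int = 10    # headroom for tokenizer/model differences (assumption)

    def required_window(self) -> int:
        raw = (self.system_prompt_tokens + self.longest_input_tokens
               + self.retrieved_context_tokens + self.max_output_tokens)
        return raw + (raw * self.safety_margin_pct + 99) // 100  # margin rounded up


budget = ContextBudget(system_prompt_tokens=500, longest_input_tokens=5_000,
                       retrieved_context_tokens=1_000, max_output_tokens=800)
print(budget.required_window())                          # 8030
print(budget.required_window() <= REQUIRED_MIN_WINDOW)   # True
```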

2. Data collection

Long-context systems need long-form data. That may include:

- Full PDFs

- Long chat transcripts

- Complete contracts

- Support cases

- Technical documentation

- Source code repositories

- Meeting notes or knowledge base articles

The team also needs to confirm that the data can legally be stored, processed, and used for training or retrieval. This is especially important when full documents are involved.

3. Data chunking

Most long documents still need to be split into chunks. The goal is to avoid cutting the text in a way that destroys meaning.

A good chunking pipeline should:

- Keep related content together

- Avoid splitting sentences or sections awkwardly

- Add overlap where needed

- Track source metadata

- Stay within the model’s context limit

This is where a lot of long-context quality is won or lost. Bad chunking creates missing facts, fragmented answers, and unnecessary API calls.
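A minimal sketch of such a pipeline, assuming word counts as a stand-in for tokens and blank lines as paragraph boundaries. It keeps paragraphs whole when it can, only splits oversized ones, carries a few words of overlap into the next chunk, and tags each chunk with its source. `chunk_document` and `Chunk` are hypothetical names; production pipelines usually count with the model's tokenizer and split on richer structure (headings, sentences, code blocks).

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str  # document id, file path, URL, ... (kept for citations/debugging)
    index: int   # position of this chunk within the source
    text: str


def chunk_document(text: str, source: str, max_words: int = 200,
                   overlap_words: int = 30) -> list[Chunk]:
    """Pack whole paragraphs into chunks of at most max_words words.

    Word count stands in for token count here (assumption).
    """
    if not 0 <= overlap_words < max_words:
        raise ValueError("need 0 <= overlap_words < max_words")

    # 1. Paragraphs are the unit we try not to split; only oversized ones get cut.
    pieces: list[list[str]] = []
    for para in text.split("\n\n"):
        words = para.split()
        for i in range(0, len(words), max_words):
            pieces.append(words[i:i + max_words])

    # 2. Greedily pack pieces, carrying the tail of the previous chunk as overlap.
    groups: list[list[str]] = []
    current: list[str] = []
    for piece in pieces:
        if current and len(current) + len(piece) > max_words:
            groups.append(current)
            room = max_words - len(piece)  # overlap may shrink so the chunk stays in budget
            carry = current[-overlap_words:] if overlap_words else []
            current = carry[-room:] if room > 0 else []
        current = current + piece
    if current:
        groups.append(current)

    return [Chunk(source, i, " ".join(g)) for i, g in enumerate(groups)]


chunks = chunk_document("a b c d\n\ne f g\n\nh i j k l", "doc-1",
                        max_words=6, overlap_words=2)
for c in chunks:
    print(c.source, c.index, c.text)
# doc-1 0 a b c d
# doc-1 1 c d e f g
# doc-1 2 g h i j k l
```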

4. Infrastructure

Longer context needs more memory, and therefore more money, more electricity, more attention to... you get the idea. It means larger GPUs, more careful batching, tighter monitoring, and better cost controls.

For example, moving from short prompts to 8K or 16K token workloads can change:

- GPU selection

- VRAM requirements

- Batch size

- Throughput

- Latency

- Autoscaling behavior

- Failure modes

The infrastructure has to match the context target. Otherwise the system may work in testing and fall over in production.
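To see how the context target drives batch size and throughput, here is a rough capacity sketch. It assumes every sequence reserves its full window of KV cache (a worst case) and uses an illustrative 7B-class shape, about 0.5 MiB of fp16 KV cache per token. The 80 GiB GPU, 14 GiB of weights, and 6 GiB of runtime overhead are placeholder numbers, and `max_concurrent_sequences` is a hypothetical helper.

```python
GIB = 1024 ** 3
# ~0.5 MiB per token: 2 (K and V) * 32 layers * 32 KV heads * 128 dims * 2 bytes (fp16)
KV_BYTES_PER_TOKEN = 2 * 32 * 32 * 128 * 2


def max_concurrent_sequences(vram_gib: float, weights_gib: float, overhead_gib: float,
                             kv_bytes_per_token: int, context_tokens: int) -> int:
    """Full-length sequences whose KV cache fits in the VRAM left after weights."""
    free_bytes = (vram_gib - weights_gib - overhead_gib) * GIB
    per_sequence = kv_bytes_per_token * context_tokens
    return max(0, int(free_bytes // per_sequence))


for ctx in (8_192, 16_384, 32_768):
    n = max_concurrent_sequences(80, 14, 6, KV_BYTES_PER_TOKEN, ctx)
    print(f"{ctx:>6}-token window: up to {n} concurrent full-length sequences")
# 8192 -> 15, 16384 -> 7, 32768 -> 3
```

Doubling the window roughly halves the number of full-length sequences per GPU. Serving engines with paged KV caches (vLLM's PagedAttention, for example) allocate memory as tokens arrive, so real concurrency at typical prompt lengths can be higher than this worst case.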

5. Model selection

Not every model handles long context well. Some models support long windows natively. Others can technically be extended, but quality may degrade if the model was not trained or tuned for that range.

When selecting a model, the team should check:

- Maximum supported context length

- Quality at long context

- Retrieval performance

- Latency profile

- Memory behavior

- Positional encoding strategy

- Cost per request

The advertised context window is not enough. The model needs to perform well near the limit.
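One common way to check quality near the limit is a "needle in a haystack" probe: bury a known fact at different depths of a long prompt and ask the model to retrieve it. The sketch below only builds the prompts; it assumes words as a proxy for tokens, and `build_needle_prompt`, the filler sentences, and the commented-out `ask_model` call are hypothetical placeholders for your own corpus and client.

```python
import random


def build_needle_prompt(filler_sentences: list[str], needle: str,
                        target_words: int, depth: float, seed: int = 0) -> str:
    """Bury `needle` at a relative depth (0.0 = start, 1.0 = end) of filler text.

    Words stand in for tokens here (assumption); use the model's tokenizer
    to hit a real token budget near the context limit.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    haystack: list[str] = []
    n_words = 0
    while n_words < target_words:
        sentence = rng.choice(filler_sentences)
        haystack.append(sentence)
        n_words += len(sentence.split())
    haystack.insert(round(depth * len(haystack)), needle)
    return " ".join(haystack)


filler = ["The quarterly report was filed on time.",
          "Nothing unusual appeared in the server logs."]
needle = "The vault code is 4417."
for words in (1_000, 6_000):          # e.g. well inside vs. close to the window
    for depth in (0.0, 0.5, 1.0):
        prompt = build_needle_prompt(filler, needle, words, depth)
        prompt += "\n\nQuestion: What is the vault code?"
        # answer = ask_model(prompt)   # hypothetical client for the model under test
        # passed = "4417" in answer
```

Passing this probe is necessary but not sufficient: it tests retrieval of a single fact, not reasoning across the whole window.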

6. Experiment tracking

Context length should be logged as a first-class experiment variable.

Every run should capture:

- Context window size

- Chunk size

- Overlap strategy

- GPU type

- Memory usage

- Tokens per second

- Latency

- Cost

- Evaluation results

This makes it possible to compare short-context and long-context runs without guessing.
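A minimal sketch of that logging, assuming one JSON object per line in a local file. `ContextRun`, `log_run`, and `load_runs` are hypothetical names, and the values in the usage example are placeholders, not measurements. If you already use an experiment tracker such as MLflow, the same fields map onto its params and metrics.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass


@dataclass
class ContextRun:
    run_id: str
    context_window: int      # tokens
    chunk_size: int          # tokens per chunk
    overlap_strategy: str    # e.g. "fixed-64" or "none"
    gpu_type: str
    peak_memory_gib: float
    tokens_per_second: float
    p95_latency_ms: float
    cost_usd: float
    eval_score: float        # whatever your evaluation harness reports


def log_run(run: ContextRun, path: str) -> None:
    """Append one run as a JSON line; every run shares the same schema."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(run)) + "\n")


def load_runs(path: str) -> list[ContextRun]:
    """Read all runs back so short- and long-context runs can be compared."""
    with open(path, encoding="utf-8") as f:
        return [ContextRun(**json.loads(line)) for line in f if line.strip()]


# Usage with placeholder values (not real measurements):
log_path = os.path.join(tempfile.mkdtemp(), "context_runs.jsonl")
log_run(ContextRun("short-ctx", 4_096, 512, "fixed-64", "example-gpu",
                   20.0, 900.0, 450.0, 0.40, 0.71), log_path)
log_run(ContextRun("long-ctx", 16_384, 2_048, "fixed-128", "example-gpu",
                   38.0, 610.0, 1_300.0, 1.10, 0.78), log_path)
for run in load_runs(log_path):
    print(run.run_id, run.context_window, run.p95_latency_ms, run.eval_score)
```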

7. Testing and validation

Long-context bugs often do not show up with short prompts.

The test suite should include:

- Short inputs

- Medium inputs

- Maximum-length inputs

- Inputs near the limit

- Realistic long documents

- Multi-document prompts

- Stress tests for memory and latency

The goal is to verify that the model does not crash, truncate important information, hallucinate around missing context, or slow down beyond acceptable limits.
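Part of that suite can be generated straight from the window size. A minimal sketch, assuming a guard that rejects oversized prompts instead of silently truncating them; `length_cases`, `admit_prompt`, `run_length_suite`, and `ContextOverflow` are hypothetical. A real suite would also send realistic documents at these sizes to the model and measure latency, memory, and answer quality.

```python
class ContextOverflow(ValueError):
    """Raised when a prompt does not fit; better than silent truncation."""


def length_cases(context_window: int, reserved_output: int) -> dict[str, int]:
    """Prompt sizes (in tokens) to exercise, relative to the usable window."""
    usable = context_window - reserved_output
    return {
        "short": 64,
        "medium": usable // 4,
        "near_limit": usable - 32,
        "at_limit": usable,
        "over_limit": usable + 1,  # must be rejected or chunked, never silently cut
    }


def admit_prompt(n_tokens: int, context_window: int, reserved_output: int) -> int:
    """Hypothetical guard: return remaining headroom or fail loudly."""
    usable = context_window - reserved_output
    if n_tokens > usable:
        raise ContextOverflow(f"{n_tokens} tokens exceeds {usable} usable tokens")
    return usable - n_tokens


def run_length_suite(context_window: int, reserved_output: int) -> dict[str, str]:
    results = {}
    for name, n_tokens in length_cases(context_window, reserved_output).items():
        try:
            admit_prompt(n_tokens, context_window, reserved_output)
            results[name] = "admitted"
        except ContextOverflow:
            results[name] = "rejected"
    return results


print(run_length_suite(context_window=8_192, reserved_output=1_024))
# {'short': 'admitted', 'medium': 'admitted', 'near_limit': 'admitted', 'at_limit': 'admitted', 'over_limit': 'rejected'}
```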

8. Documentation

Context decisions need to be documented. Future engineers should be able to understand:

- Why the context size was chosen

- How chunking works

- Where truncation can happen

- Which GPU profile is required

- How to increase or reduce the window

- What tests must pass before changing it

This prevents context length from becoming tribal knowledge.

9. Production monitoring

Once users are in the system, monitor the actual request lengths. Track things like:

- Average prompt length

- 95th percentile prompt length

- Maximum prompt length

- Truncation events

- Retrieval misses

- API call volume

- Latency by token count

- Cost by request type

If users start pushing against the window, that is an early signal. The system may need better chunking, better retrieval, caching, prompt compression, or a longer-context model.
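A minimal sketch of those length metrics, assuming you already log the prompt token count of each request. `prompt_length_report` is hypothetical, the sample counts are made up for illustration, and the 80% warning threshold is an arbitrary choice; the percentile uses the nearest-rank method.

```python
import math
import statistics


def nearest_rank_percentile(values: list[int], pct: float) -> int:
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def prompt_length_report(prompt_tokens: list[int], context_window: int,
                         warn_ratio: float = 0.8) -> dict[str, float]:
    """Summarise logged prompt lengths against the configured window."""
    n = len(prompt_tokens)
    return {
        "avg": statistics.fmean(prompt_tokens),
        "p95": nearest_rank_percentile(prompt_tokens, 95),
        "max": max(prompt_tokens),
        # requests that would be truncated or rejected at this window
        "over_window": sum(t > context_window for t in prompt_tokens),
        # early-warning signal: share of requests already near the limit
        "near_limit_share": sum(t >= warn_ratio * context_window for t in prompt_tokens) / n,
    }


sample = [800, 1_200, 1_500, 2_000, 7_000, 7_900, 8_500, 900, 1_100, 1_300]  # made up
print(prompt_length_report(sample, context_window=8_192))
# {'avg': 3220.0, 'p95': 8500, 'max': 8500, 'over_window': 1, 'near_limit_share': 0.3}
```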

10. API limits and cost behavior

Shorter context can create more API calls. If one user request has to be split into many chunks, the system may hit:

- Higher latency

- Higher request volume

- Rate limits

- More billed API calls

- More orchestration complexity

- More opportunities for partial failure

A larger-context model can reduce the number of chunks required for some workflows, but it may also increase the cost of each request.

The right answer is not always “bigger window.” The right answer is the smallest context window that reliably supports the use case.
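The chunking-versus-window tradeoff is simple arithmetic. A minimal sketch, assuming a map-style workflow where each call carries a fixed prompt overhead, reserves room for the output, and repeats some overlap tokens from the previous chunk; `chunked_call_plan` is hypothetical and the token counts are illustrative. It counts calls and billed input tokens only, because per-token prices differ by model and provider.

```python
import math


def chunked_call_plan(input_tokens: int, window: int, prompt_overhead: int,
                      max_output: int, overlap: int = 0) -> tuple[int, int]:
    """Return (api_calls, billed_input_tokens) for map-style chunked processing."""
    chunk = window - prompt_overhead - max_output  # input tokens each call can carry
    if chunk <= overlap:
        raise ValueError("window too small for overhead + output + overlap")
    step = chunk - overlap                         # new tokens covered per extra call
    calls = max(1, math.ceil((input_tokens - overlap) / step))
    # every extra call re-sends `overlap` tokens, and every call pays the overhead
    billed = input_tokens + (calls - 1) * overlap + calls * prompt_overhead
    return calls, billed


# A 100,000-token document, 500-token instructions, 700 tokens reserved for output.
print(chunked_call_plan(100_000, 8_192, 500, 700, overlap=200))    # (15, 110300)
print(chunked_call_plan(100_000, 131_072, 500, 700, overlap=200))  # (1, 100500)
```

Here the small window needs 15 calls and bills roughly 10% more input tokens than the single long call, but each call is small and fast; the single long call avoids orchestration and partial failures but needs far more KV cache memory per request.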

The Practical Flow

For a real project, the workflow looks like this:

- Define the longest input the product needs to handle.

- Confirm the data exists and can legally be used.

- Split the data into chunks that preserve meaning.

- Choose infrastructure that can support the target window.

- Select a model that performs well at that context length.

- Track context length in every experiment.

- Test the model with realistic long inputs.

- Document how the window, chunking, and infrastructure work.

- Monitor real user request lengths in production.

- Adjust when usage patterns change.

This keeps context length connected to the actual system, not just the model card. It's super duper important, so get it right! Test, then test more, automate the tests, and have a validation LLM with reasoning and validation post-training awareness... or you're gonna have a bad day.

What This Means in Practice

A longer context window can improve answer quality because the model can see more relevant information at once. It can also increase cost, latency, memory usage, and operational risk.

That tradeoff matters. For most production systems, context length should be treated like any other engineering constraint:

- Capacity

- Latency

- Reliability

- Cost

- Security

- Compliance

- Maintainability

It is not just a prompt design detail. It affects the data pipeline, model choice, infrastructure plan, testing strategy, and production budget.

Checklist for Your Future LLM Project

Before committing to a context length (and quantization), make sure you can answer these questions:

- What is the longest input this product needs to handle?

- Are we working with snippets, full docs, or multi-doc workflows?

- Do we have the right to store and process the full source data?

- Are we chunking the data in a way that preserves meaning?

- Do we need overlap between chunks?

- Can the selected model support the required window?

- Does the model still perform well near the edge of that window?

- Do we have enough GPU memory for the target workload?

- Are we tracking context length in experiments?

- Are we testing short, medium, long, and maximum-length inputs?

- Are we monitoring real request lengths in production?

- Are we watching API limits, cache performance, tokens/second, and cost?

If the answer to those questions is clear, the project is probably handling context length correctly. If the answer is vague, context length is still an unresolved architecture risk.