Why Do We Need Her Anyway?

You don't. Do it all yourself. Refuse Experience. Dive into failure.

Indeed. Do it yourself and ignore all knowledge, all advice. Build every wheel as new. Design everything from ground zero, always. Exist only in The Now. Push everyone away who advises otherwise - at your own peril. You are The Only Expert.

Choose your failure mode and you will find it quickly.

Please Skip the Philosophy

A valid request. Perhaps you don't care about this verbosity of thought, or considerations therein, or maybe you're aware of the reprisals and follies and want to move on to the engineering discussion? If so, simply scroll down to the section titled, "How Does This Relate to Memory". Note that in doing so you will miss a blurb from Office Space.

Endeavor to Endure the Philosophy

A fair degree of the human experience involves a process by which those of "early-phase cognition" attempt to self-obfuscate their "desire for desire", attempting to live a life of nebulous and fleeting happiness, non-blissfully and unaware that it is this desire to feel and experience desire itself which has cornered their experience into a lifetime of endless searching, yearning, wanting, never satiated, eventually growing old and stale and losing their imaginative or creative or physical edge.

This cycle of The Great Chase is one basis of Sisyphean frustration; one which - until it is surpassed - requires being acknowledged, accepted, and integrated into their forward movement.

Some understand what it is to exist with desire: that all-controlling, seemingly inescapable force which presses down upon the rock, forever trapping your perspective in the wheel of the unknown yet forever traveled; repeating mistakes which others warned against, refusing awareness of one's own unfortunate mentality and its guiding line towards an inevitable failure; experiences from which no lessons are ever learned.

Go ahead. Do it. Don't learn from others. Do everything yourself, do it with whatever certainty has convinced you that you are correct - do everything as though this is the first time anyone has ever tried to achieve the new shiny thing. Surely, this must be the path towards truth, self-realization, self-actualization, self-assurance, and that desperation of desire for freedom from desire to desire everything... and then... surely success is ensured?

No, that modality of mentality would achieve its opposite effect, and it would be a waste of everyone's time; because despite the ego-driven main-character syndrome which has become so performatively boring in the past two decades of social media, it's not all about You, or Me, it's about Everyone alive and even those who we have loved who died.

Mr. Gibbons

Peter Gibbons:

It's not just about me and my dream of doing nothing. It's about all of us... Michael, we don't have a lot of time on this earth!

We weren't meant to spend it this way. Human beings were not meant to sit in little cubicles staring at computer screens all day, filling out useless forms and listening to eight different bosses drone on about mission statements.

Michael Bolton:

I told those fudge-packers I liked Michael Bolton's music.

Peter Gibbons:

Oh, that is not right, Michael.

Purposeless self-serving inefficiency which is predicated on assured failure wastes time that humanity does not have - we do not have time for oppositional defiance induced mediocrity. Stop the gamification.

The Mindset of Being Simply Wrong

Instead of repeating the pattern described above, grow the fuck up and learn how to effectively learn from others, to learn from the past, to learn using a reinforcement framework of concept-action-processing. "Thems may be big words", but this is not a complicated concept. One does not have to choose the path of stupidity. Ignorance is another matter: we're all ignorant in all manner of ways, and that's inescapable. However, when one ignores the data, ignores the lesson, and chooses the path before them via oppositional defiance, that's called "being stupid". It's stupid not to learn from others.

How Does This Relate to Memory

Memory is everything. The conscious experience, the neural network running inside our brains, the execution of "The Default Mode Network", requires effective memory - short-term as well as consolidation to long-term memory.

So, when the industry was informed of the untimely discontinuation of Intel's Optane NVDIMMs... we were all screwed. Until Q2 2026. Now it's Q2 and finally CXL non-volatile memory is available in sufficiently equivalent density, performance, and lifespan to exceed Optane's role, and it is available in PCIe Gen-4 and Gen-5 and soon... Gen-6.

An Introduction to CXL

- It's not HBM, it's not 3D XPoint, it's not SRAM, nor VRAM

- CXL is packaged in PCIe, U.3, and EDSFF formats

- CXL offers dense capacity, low-latency, and exceptional I/O

- CXL is made for AI/HPC/OLTP memory acceleration

- CXL is the new hotness, it is the new standard, it is performance

HPC Cluster Storage Acceleration

CXL is being built into (not next-gen, now-gen) storage appliances and network protocol accelerator systems used in High Performance Computing, in AI/ML which leverages HPC tech, and in Supercomputers.

CXL in Telco DSP as Protocol Caches

CXL is perfectly suited for digital signal processing on a range of network protocols and their intra-chassis and inter-chassis RDMA-driven networks. There's one person I know who hates it when I bring up RDMA - truly an example of being willfully ignorant. Moving on...

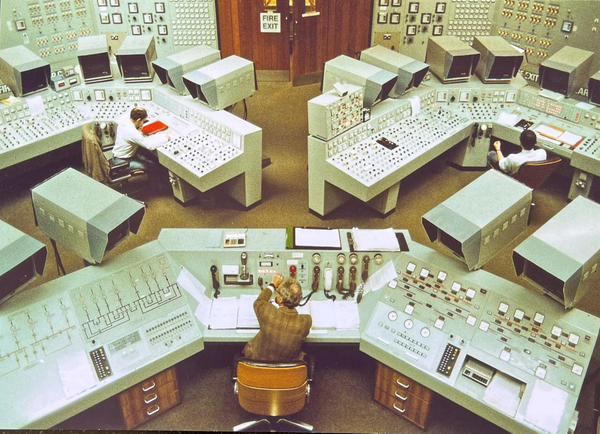

CXL Memory in Telco ATCA Chassis

- 14 slots in 19-inch rack ≈→ ∆ {$N total trays per 42U ≈ μ19}

- 16 slots in 23-inch ETSI rack ≈→ ∆ {$N total trays per 42U ≈ μ23}

- Chassis heights from 4U to 15U ≈↑ ∆ [[ μ4, μ19, μ23 ]]

- Telco grade Power & Cooling Efficiency & Redundancy ++

- Mil-DoD Compliant Physical & Operational Security ++

- Industrial NEBS Environmental Operating Ranges ++

- Zero-Downtime Serviceability & Upgrade/Maint ++

- Intra+Inter-Chassis Redundancy (Modular Scale) ++

- Geo-Deploy Designed for regional XOR continental mesh ++
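The slot counts and chassis heights in the list above imply a simple density calculation. The following is a minimal sketch, not a vendor sizing tool. It assumes that every chassis offers its full slot count regardless of height (real short chassis often carry fewer slots) and that rack space is consumed in whole chassis. The `Chassis` class, the `trays_per_rack` function, and the `reserved_u` parameter are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass

RACK_UNITS = 42  # full-height rack, as referenced in the list above


@dataclass(frozen=True)
class Chassis:
    """Hypothetical description of one telco chassis."""
    name: str
    slots: int      # trays (blades) per chassis: 14 for 19-inch, 16 for 23-inch ETSI
    height_u: int   # chassis height in rack units (4U to 15U per the list above)


def trays_per_rack(chassis: Chassis, rack_units: int = RACK_UNITS, reserved_u: int = 0) -> int:
    """Return how many trays fit in one rack, counting whole chassis only.

    reserved_u is an assumed allowance for switches, PDUs, or cable management.
    """
    if chassis.height_u <= 0:
        raise ValueError("chassis height must be positive")
    usable_u = rack_units - reserved_u
    if usable_u < 0:
        raise ValueError("reserved space exceeds rack height")
    return (usable_u // chassis.height_u) * chassis.slots


if __name__ == "__main__":
    # Example heights are assumptions chosen from the 4U-15U range.
    for chassis in (
        Chassis("19-inch", slots=14, height_u=13),
        Chassis("23-inch ETSI", slots=16, height_u=15),
    ):
        print(chassis.name, trays_per_rack(chassis))
```

With these assumed heights, a 13U 19-inch chassis fits three times into 42U (42 trays) and a 15U 23-inch ETSI chassis fits twice (32 trays). Reserving rack units for networking or power lowers both numbers.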

So, Why Should We Listen to Her?

Because I've been building and iterating these types of storage architectures for nearly twenty years of my career. If you want to do it all from scratch or buy this type of storage performance for 10x the cost, go ahead; the market exists and you can be the big winner.

However, if you don't want to pay a premium for non-OpenSource storage clusters which are capable of serving and scaling from 10s to 100s of Petabytes, then this is the direction by which engineering solutions arrive at the datacenter. My storage designs range from Oracle's Exadata & Exascale clusters which have been adapted to run non-standard protocols, to fully purpose-built data-acceleration clusters which use COTS building blocks to provide similar performance and scale at a fraction of the cost (not including consulting, engineering scope, and top-to-bottom fully-automated storage architecture deployments).

Networking has improved, NAND designs have advanced, and costs have fallen in $/TB and $/Watt... yet always... always the storage sizes grow in density and capabilities. This is natural and expected.

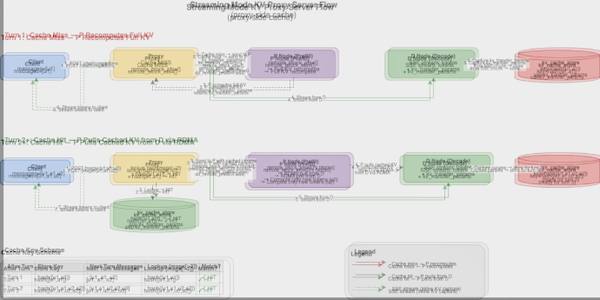

Project Coherent Storage, an Evolution

Six primary iterations, tracking the advancement of NAND acceleration, Network Acceleration, Protocol Offloading, Non-Volatile RAM, and now we see CXL capable of being deployed into reference architectures by which all others may be measured.

- Project Coherent Storage v1, (2010-2012)

- Project Coherent Storage v2, (2011-2015)

- Project Coherent NAND, (CMPLX.io) (2015-2017)

- Project Coherent IO-Max v1, w/ Optane PMem (2020-2024)

- Project Coherent IO-Max v2, w/ Optane PMem (2024-2025)

- Project Coherent Storage v3, w/ CXL AIC/U.3 (2026)

Development Details - Concise Timeline

A little bit of engineering nerdery for those who care... and btw, the community myth that ZFS cannot fulfill a role as a multi-node clustered filesystem is wrong.

What About the "ZFS Can't Cluster" Myth?

The "Clustered ZFS" operating modality for multi-controller interactions (not limited to A/B active/passive or even active/active with modified pool-async hooks) requires advanced network hardware and engineering experience which most are not aware of, and so the myth perpetuates; but it is wrong and it has been wrong for as long as SAS drives have had dual-heads and shelves have had 4+ SFF connectors ready for multi-multipath-chaining - and enterprise NVMe drives are also available in this arrangement for the same purpose.

Project Coherent Storage v1 (2010-2012)

- Coherence Grid (DDR3 (Tier1)) + Custom Relational-Vector DBMS

- Sunfire ZFS controllers w. Multi-Protocol-Net Interlinks (IB, IPoIB, mNFS+, FC, FCoE)

- Geo-Dist-Nodes w. DDR3-PCIe SuperCap "Hot Cache Layer" (Tier2), w. Sunfire X4600/M2 @ 64 DIMMs Hotswap

- Geo-Dist-Nodes w. SAS SSDs (Tier3) + SAS Spinner (Tier4)

- Controller A/B+ nodes w. SAS-HBA to LTO-N (Tier5)
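The five tiers above read naturally as a fastest-to-slowest lookup path. The sketch below is a toy model of that idea only. It assumes a read-through lookup that promotes a hit into Tier1, which is an illustrative policy and not a description of how the Coherence Grid or the ZFS controllers actually move data. `TieredStore`, `V1_TIERS`, and the in-memory dictionaries standing in for hardware are all hypothetical.

```python
# Tier labels taken from the v1 list above; everything else is an assumption.
V1_TIERS = [
    "Tier1: DDR3 Coherence Grid",
    "Tier2: DDR3-PCIe SuperCap hot cache",
    "Tier3: SAS SSD",
    "Tier4: SAS spinner",
    "Tier5: LTO tape",
]


class TieredStore:
    """Toy read-through store: search tiers fastest-first, promote hits to Tier1."""

    def __init__(self, tier_names):
        # Each tier is a plain dict standing in for a real device class.
        self.tiers = [(name, {}) for name in tier_names]

    def put(self, key, value, tier_index):
        """Place a value directly into the tier at tier_index (0 is fastest)."""
        self.tiers[tier_index][1][key] = value

    def get(self, key):
        """Return (tier_name, value) for the fastest tier currently holding key."""
        for index, (name, data) in enumerate(self.tiers):
            if key in data:
                value = data[key]
                if index > 0:
                    # Assumed policy: copy the hit into Tier1 for later reads.
                    self.tiers[0][1][key] = value
                return name, value
        raise KeyError(key)


if __name__ == "__main__":
    store = TieredStore(V1_TIERS)
    store.put("block-42", b"payload", tier_index=3)
    print(store.get("block-42")[0])  # first read is served from the spinner tier
    print(store.get("block-42")[0])  # second read is served from Tier1
```

A real design would also need eviction, write-back, and consistency across geo-distributed nodes, none of which this toy attempts.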

Project Coherent Storage v2 (2011-2015)

- Coherence Grid (DDR3 (Tier1)) + Relational-Vector DBMS

- Sunfire ZFS controllers w. Multi-Protocol-Net Interlinks (IB, IP Native L2/L3, mNFS+, FCoE)

- Geo-Dist-Nodes w. FusionIO Stacked "Hot Cache Layer" (Tier2)

- Geo-Dist-Nodes w. SAS SSDs (Tier3) + SAS Spinner (Tier4)

- Optional A/B nodes w. SAS-HBA to LTO-N (Tier5)

Project Coherent NAND - CMPLX.io (2015-2017)

- Coherence Grid DDR4 (Tier1) + Relational-Vector DBMS

- Native ZFS controllers w. Multi-Protocol-Net Interlinks (IB, IP Native L2/L3, mNFS+, iSER)

- Geo-Dist-Nodes w. NAND Stacked "Hot Cache Layer" (Tier2)

- Geo-Dist-Nodes w. SAS3 SSDs (Tier3) + SAS3 Spinner (Tier4) [+ optional SAS3 HBA to LTO for Iron Mountain (Tier5)]

- This architecture was a basis for one of my former startups, which was sold to private equity and built upon for their HFT clusters under very tight NDA + Confidentiality Contract

Project Coherent IO-Max Storage Architectures v1, 2, 3

These storage architectures share core foundations, with iterations upward as Non-Volatile Memory was introduced on a wider scale into my designs. The initial design for v1 featured the first generation of Intel Optane 3D XPoint (Series 100), v2 used Series 200, and v3 now has CXL taking the place of Optane PMem SKUs.

The details of each design from v1 and v2 may be expanded upon later, simply for historical purposes, and I have other things to do right now... but if you've made it this far into the post and want to see the specification ADRs and related research, which is RAG-pipelined and iteratively deployed on real hardware for revisions and regression testing to validate LLM Inference usage & advancements with the now-opensource Coherence-CE version... scroll down for a link to v3's repo.

- Project Coherent IO-Max v1, (2020-2023)

- Project Coherent IO-Max v2, (2023-2025)

- Project Coherent IO-Max v3, CXL (2026)