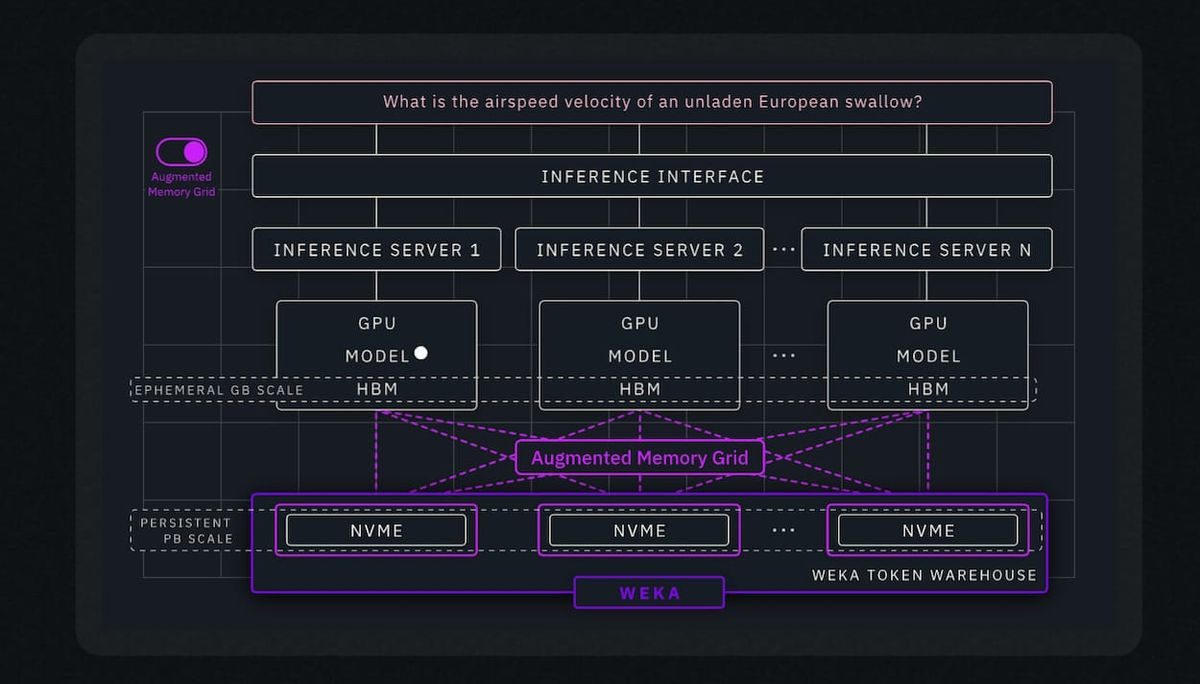

Query-Scoping AI/ML Inference Storage Architectures

Q1-26 has been an interesting time - How does yours stack up?

Sometimes the industry provides intrigue and excitement, and sometimes it provides one with opportunities to enjoy face-to-face experiences with the raw humanity involved with entirely preventable system failures.

Leadership Asleep at the Wheel

Sometimes these failures occur when leadership sits in positions of elevated decision-power with little to no experience handling specific engineering topics. The Lumberg Principle comes to mind when working with those who refuse to acknowledge their inexperience.

It's OK to be aware of one's limits and admit when one is wrong.

Too often these issues occur when management is not paying attention to the interpersonal activity of direct reports, or when management never wanted to leave their former engineering roles and simply does not enjoy managing people - because they're not good at managing people. Other times it's because "lead engineers" encounter new coworkers who have more effective engineering experience, stronger cognitive capabilities, and so on.

Unfortunately, solving those problems generally requires an entirely different set of tools and processes compared to storage engineering. Additionally, leadership that does not assist in soft-solving staff concerns will let their Org crumble before their eyes, and then blame everyone else - you know this because we've all been there and then moved on.

Scoping & Analysis Questions

- Do you know the engineering limitations of your AI-Org's Inference Infrastructure? Do you care enough to want to know?

- Was OEM/ODM hardware acquired due to marketing hype on "Next-Gen Distributed Disaggregated Storage", without knowing that most of the implementations are based too heavily on "Low-Cost High-Perf" skew-manipulated metrics? (aka "future boat anchors", aka "no free lunch", aka "miracles do not exist", aka "a fool and their money part ways..." etc etc)

- Are you intimately familiar with NVMe/NAND controller algos for wear-leveling and write amplification, and their impact on Mass-Failure-Rates? Do you know how that firmware attempts to prevent drive failure at the expense of perf, longevity, consistency, and queue management?

- Did your "Director" choose BOM SKUs without supporting lab-based load-testing of physical hardware, while refusing validation of the vendor's power-usage claims during simulation of I/O patterns for Peak/Low/Mean traffic?

- Did your "Director" (yes, still in quotes) get confused by your usage of Bollinger-Band/SMA volatility analysis as applied to dataset analytics reporting for growth probability trendlines?

- Did your CTO approve the Storage BOM but ignore economic stats (VIX, FRS + FED + FRED & CPI probability scores) which strongly impact seasonal "Inference Token Usage Tiering", leading to the Org's Site/Service/SaaS/etc. throwing "rate-limited" or "API Limit Reached", perhaps more than once?

- Did you know that distributing multi-tenant I/O workloads across a single namespace on high-capacity 👾QLC👾 drives, as a means to offset low-TBW limits and failure probability, opens the door for CISO compliance concerns?

- Did the Director demand a BOM based on illusory & imaginative $/N*, without knowing any of the physical LUN, S3-Obj, mNFS / pNFS, or iSER protocol-driven storage requirements ahead of time?
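
For anyone whose "Director" got lost at the Bollinger-Band/SMA question above, here is a minimal Python sketch of the idea applied to dataset growth. Everything in it is an assumption for illustration: the function names, the window and `k` defaults, and the sample daily dataset sizes are hypothetical, not taken from any real reporting pipeline. The breakout check compares each new observation against the bands of the preceding window, which is a variation on the classic chart where the current point is included in its own band.

```python
from statistics import mean, pstdev


def bollinger_bands(series, window=20, k=2.0):
    """Return one (sma, upper, lower) tuple per full window in the series."""
    if window < 2 or window > len(series):
        raise ValueError("window must be between 2 and len(series)")
    bands = []
    for end in range(window, len(series) + 1):
        chunk = series[end - window:end]
        sma = mean(chunk)        # simple moving average of the window
        sd = pstdev(chunk)       # population standard deviation of the window
        bands.append((sma, sma + k * sd, sma - k * sd))
    return bands


def breakouts(series, window=20, k=2.0):
    """Return indexes whose value falls outside the bands of the preceding window."""
    bands = bollinger_bands(series, window, k)
    flagged = []
    # bands[i] covers series[i : i + window]; the next observation is series[i + window].
    for i, (_, upper, lower) in enumerate(bands[:-1]):
        value = series[i + window]
        if value > upper or value < lower:
            flagged.append(i + window)
    return flagged


if __name__ == "__main__":
    # Hypothetical daily dataset sizes in TB, for illustration only.
    daily_tb = [100, 102, 101, 105, 107, 110, 108, 115, 130, 131]
    for day in breakouts(daily_tb, window=5, k=2.0):
        print(f"day {day}: {daily_tb[day]} TB is outside the prior 5-day band")
```

A wide band means volatile growth, and repeated breakouts above the upper band suggest the capacity trendline in the BOM is already stale. Treat it as a starting point for a capacity conversation, not a forecast.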